Truth-seeking AI Accelerates: 40+ New Builders Join the Mission (August 2025)

Plus: top reads for philosopher-builders and a new Editorial Lead

Dear Cosmos community,

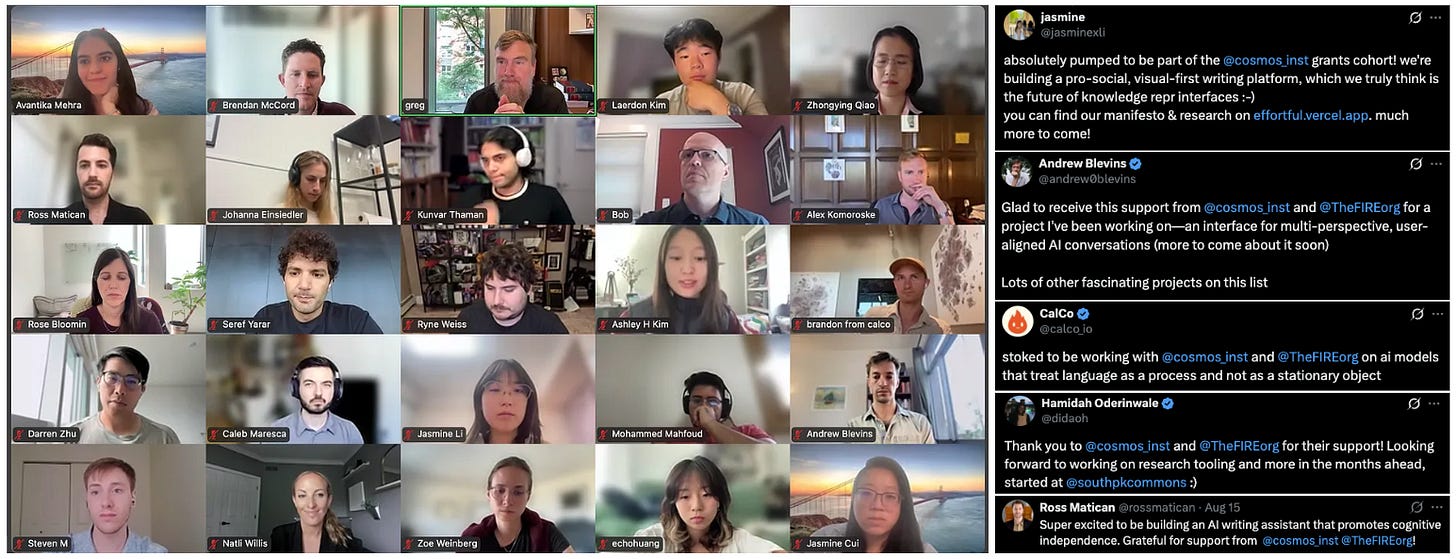

This month we awarded 40+ new grants including our first cohort of AI x Truth-seeking projects (apply by August 31st for the next wave), welcomed our new Editorial Lead, and saw new research and ideas from across the Cosmos network.

Next month Brendan will be giving the Adam Smith Lecture at Panmure House in Edinburgh. We’re running a (<300 word) essay competition for two people to win travel and accommodation to attend the lecture and a VIP dinner. Apply now.

We recently wrote about why we need philosopher-builders, technologists who create in service of human flourishing. Here’s how we’re developing and supporting them👇

🏗️ Grants

Earlier this month Cosmos and FIRE had a welcome call with our inaugural 27 AI x truth-seeking grant winners, many of them featured below. See the full list of winners.

For the next round we’re keen to support ideas focused on a J.S. Mill-inspired “marketplace of ideas” approach to truth-seeking. With that in mind we’re particularly interested in pitches on:

Community Notes approaches to information verification that retain human judgment (e.g., building on work aiming to scale human judgment via LLMs)

Censorship-resistant formats (e.g., containers like Szdt—a project by Cosmos grantee Gordon Brander)

Identification of AI outputs to establish idea provenance (e.g., projects like Reality Defender or GPTZero)

We also accepted 15 additional grantees to the Cosmos community, working on different projects building AI for human flourishing.

General track winners:

Benjamin Brast-McKie to work on accurate self-reflection and transparent reasoning in AI systems.

Benjamin Laufer to reveal hidden “family trees” of ideas, biases and quirks that spread through fine-tuning pipelines into AI models.

German Reyes to track how AI impacts critical thinking in higher education.

Mario Giulianelli to test AI systems for emergent self-interest through the use of non-cooperative dialog games.

Nathan Lubchenco to figure out when models are hallucinating by analyzing sets of responses at different levels of randomness.

Tasha Pais to develop scalable interpretability tools for reasoning AI models.

Xiaoyao Chi to collect emotion-related idioms across languages while comparing AI and human emotional nuance.

Younesse Kaddar to help make AI reasoning more transparent by turning natural language into statistical models.

Oxford philosophy x AI seminar track winners:

Botao Amber Hu to develop an AI-based approach to help find conceptual art that could become cultural focal points.

Elian McCarron to build an AI tool for humanities and social science researchers using token authentication.

Francesco Cipriani to build a digital tool that focuses on patient individuality and autonomy, and aids psychotherapists in the treatment of mental health conditions.

James Coslett to develop a sentence-level word processor that allows the drafting and review of claims by discussion.

Jake Reid and Niclas Göring to build a live website that helps synthesise the content of parliamentary debates clearly and transparently.

Wessel Vinke to benchmark LLMs’ ability to recognize whether the claims they are evaluating are inherently vague or precise.

👥 Team

We’ve been thrilled to see engagement with philosophy and AI through our writings, open-source reading lists, and contributor essays on Substack.

To help develop this as well as other editorial projects we’re excited to have Harry Law join our team as Editorial Lead.

Some pieces we’ve loved from Harry: Against Cultural Alignment, The Fly and the Filter, and Reflections on AGI from 1879. He was previously a senior researcher at Google DeepMind where he worked on policy, safety, and ethics. His work has appeared in outlets including Time, The Guardian, The Economist, WIRED, MIT Tech Review, Nature, New Scientist, and more.

📖 Seminars

In July, we wrapped up our fifth seminar of 2025. Deep dives into areas of philosophy and AI with the University of Oxford, Liberty Fund, Edge Esmeralda, St John’s College, and the Aspen Institute are now complete.

This month, we’ve been open-sourcing more educational content. To go with our Oxford Philosophy x AI syllabus (now with linked roundups!) and Liberty Fund AI and Human Autonomy Reading List, we’ve published:

Takeaways on “Will AI Bring a New Enlightenment or Digital Despotism” – featuring reflections from Ashley Kim’s time in Aspen.

A panel discussion on AI and the Great Books – five top takeaways and video recording from St John’s College.

We’re planning more events for later this year. Two we can currently share:

On September 24th Brendan will be giving the Adam Smith Lecture at Smith’s Panmure House in Edinburgh. His talk is titled, “The Artificial ‘Impartial Spectator’: Adam Smith, AI, and the Corruption of Moral Sentiments.” We’re running a (<300 word) essay competition for two people to win travel and accommodation to attend the lecture and a VIP dinner. Apply here.

In December, we’re co-hosting an AI x Free Speech Symposium with FIRE in Austin, Texas, bringing together top AI researchers, policy experts, and select grantees.

If you’re interested in future seminars and events with Cosmos, sign up below!

✨ Fresh Ideas from the Cosmos Ecosystem

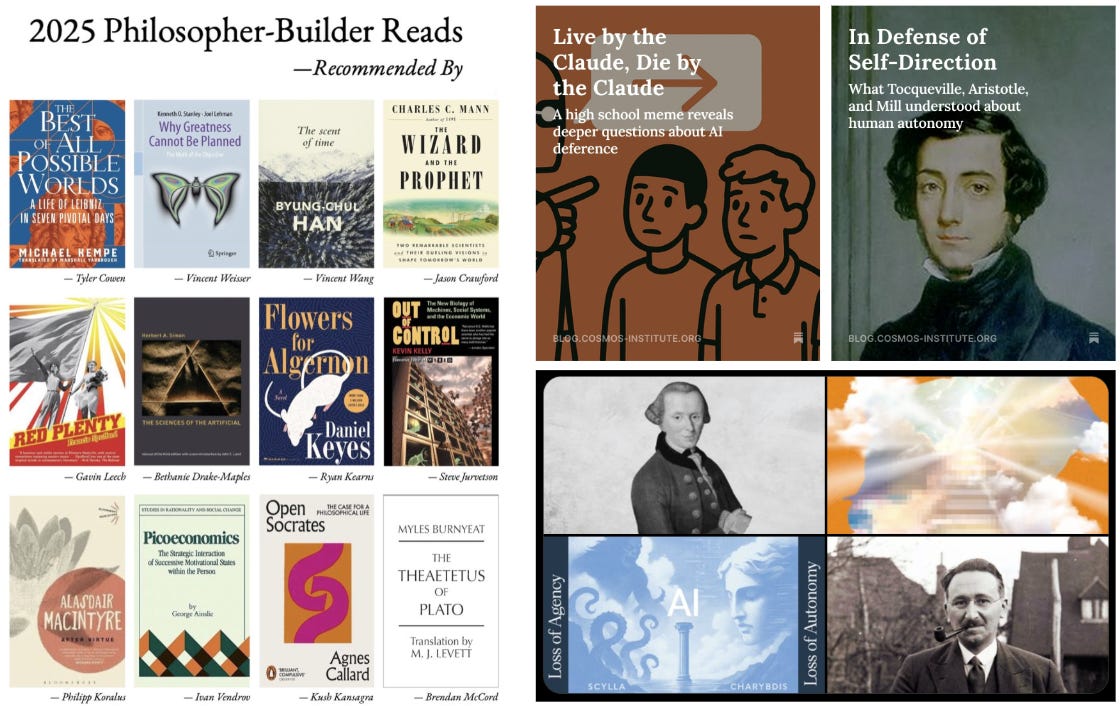

Live by the Claude, Die by the Claude: The case for AI deference, and why it’s flawed

Philosopher-Builder Summer Reads: 12 recommendations from top thinkers and builders in the Cosmos network

In Defense of Self-Direction: The philosophical roots of human autonomy through Aristotle, Humboldt, Mill and Tocqueville

AI for Science and Security essays in IFP’s Launch Sequence with contributions from Evan Miyazono, Charles Yang, and Séb Krier

🔬 Research

Mapping the open-source ML ecosystem (and accompanying thread) from recent grantees Hamidah Oderinwale and Ben Laufer

LLMs as models for analogical reasoning from former grantee Raphaël Millière

AI-AI bias: LLMs favour communications generated by LLMs from former grantee Peli Grietzer

Agent-permissions.json (a protocol for rule-based AI agent interactions with websites) from Samuele Marro

Overview of progress in AI and technical AI risk from fellow Gavin Leech

📚 What We’re Reading

Writing is Thinking | Nature

Joe Liemandt: Class Dismissed | Colossus

Underwriting Superintelligence | Kvist et al.

A Treatise on AI Chatbots Undermining the Enlightenment | Maggie Appleton

The Whale and the Reactor | Langdon Winner

Tech for Thinking | Sarah Constantin

On Optimism for Interpretability | Eric Ho

What has a Foundation Model Found? | Vafa et al.

AI is Capturing Interiority | Daniel Barcay

The Leopard | Giuseppe Tomasi di Lampedusa

🤝 Get Involved & Support Our Mission

Get involved:

Apply for a $1k-10k+ fast grant to build AI prototypes for human flourishing. Apply→

Indicate interest in our fellowship with Oxford’s HAI lab and/or partner institutions. Express interest→

Join a seminar like ones we’ve run with Oxford, St John’s, and Liberty Fund. Sign up for updates→

If you’re world-class and aligned with our mission, pitch us a role→

Support our mission:

It's time to (philosophically) build,

Brendan McCord

Founder and Chair, Cosmos Institute

For readers joining us this month:

Cosmos Institute is the Academy for Philosopher-Builders. Our mission is to ensure AI becomes civilization's greatest force for human flourishing by expanding truth-seeking, preserving autonomy, and enabling decentralized progress.
