A Letter, Year End 2025

On AI, human autonomy, and keeping the flame of freedom alive

Nothing in history has given us more leverage. Nothing has made it easier to stop thinking for ourselves.

This year I watched my four- and six-year-old flourish at Alpha School, where AI personalizes their learning and frees time for what machines cannot supply: curiosity, character, play. I saw AI systems produce new mathematics, reshape creative work, and hand ordinary people powers that once required armies of engineers.

And yet I’ve noticed something in myself I don’t much like. The readiness to accept an AI’s first draft of an email, a plan, a decision, because it’s faster, smoother, and good enough. An invisible waltz in which I take one step and then forget that I am no longer leading.

In many domains I’ve welcomed the flow and eagerly entered the new paradigm. In others I’ve pushed back: the forming of beliefs, the making of judgments, the hard labor of learning to think at all. For the deepest questions, what it means to live well, I’ve tried to refuse it entirely.

The more I talked with others this year, the more I understood this was not my private neurosis. Something is shifting. People feel it, even when they cannot name it.

At our seminars with Oxford, Aspen, Liberty Fund, St. John’s College, DeepMind, Palantir, and Microsoft, and at our AI for Truth-seeking Symposium with FIRE, a pattern declared itself: the people most anxious about preserving human judgment are often those building the systems. They see how capable these tools are becoming, and they are asking questions the rest of us would prefer to postpone.

At Anthropic’s headquarters in San Francisco, looking out over the city, co-founder Jack Clark told me he journals more than ever, writing down his thinking before consulting any AI. He knows the outputs are better with the machine. What he wants to know is whether he is still developing. This is the difference between effectiveness and judgment.

Technology that expands what is possible may narrow who is possible. But it need not.

Adam Smith understood both halves of this. You become a good thinker by doing the thinking, badly at first, then less badly. The practice is constitutive. This is the best antidote I know against becoming a Claude Boy. And the kind of people who develop through their own judgment are precisely the kind we need if we are to sustain a free society.

Twenty-five hundred years ago, Pericles stood before the mothers of Athenian dead and described what their sons died for: a city where citizens cultivate beauty and wisdom, debate openly before acting, and take responsibility for public life. A free society.

Since then, the principles of free societies have been transmitted through debates, texts, and institutions. We are entering an era where they must be embodied in code—or they will become inert. The philosophy-to-law pipelines of old have given way to philosophy-to-code.

Tocqueville saw the stakes: vigorous self-governance in which citizens grow through freedom or a softer servitude in which we gradually surrender it for comfort and convenience, becoming, in his phrase, “a flock of timid and industrious animals.” The choice is ours to make, while we still remember what it means to choose.

This is our civilizational moment. It requires people who can translate the principles of a free society into the actual systems and institutions being built.

The philosopher-builder

We named the philosopher-builder archetype in July: the kind of person who, like Benjamin Franklin, combines philosophical reflection with practical wisdom and builds institutions that embody their deepest convictions about human flourishing.

I expected this to resonate with a few hundred people. Instead, some of the most impressive founders I know sent notes saying they’d finally found language for what they were trying to do. Researchers said it changed how they thought about their careers. What I came to understand is that the division between “thinkers” and “doers” has impoverished both, and many people had been quietly waiting for permission to be whole.

The philosopher-builder is an answer to a transmission problem. Whereas the great reformers of old wrote pamphlets, today’s are writing code. They will give the principles of a free society technological expression: what autonomy looks like in API design, what decentralization means for architecture choices, what truth-seeking requires in model development.

This year Ivan Vendrov, Zoe Weinberg, Séb Krier, Jason Zhao, Alex Komoroske, Lisa Wehden, and Joel Lehman all spoke about their work through this lens. We published Summer and Winter reading lists drawing on recommendations from across our network. And we started to see a community coalesce around the conviction that philosophy and building aren’t separate activities—they’re the same activity, done with different hands.

What we built this year

Fourteen seminars, including with Oxford, Aspen Institute, Liberty Fund, St. John’s College, and Edge, bringing builders into conversation with Hayek and Polanyi on the use of knowledge in society, with Socrates and Mill on truth-seeking, with Smith on moral development.

On YouTube and other video channels, our interviews with 11 AI experts and entrepreneurs on their philosophical influences reached over a million subscribers. On Substack, our long-form essays on AI and philosophy reached over 17,000 readers.

And voices across our network produced pieces we keep returning to: Tyler Cowen on how AI will change what it is to be human, Jason Crawford’s Techno-Humanist Manifesto, Caitlin Morris on social tinkering, Gavin Leech’s “The Scaling Era” with Dwarkesh Patel, and Jack Clark’s “Silent Sirens, Flashing For Us All.”

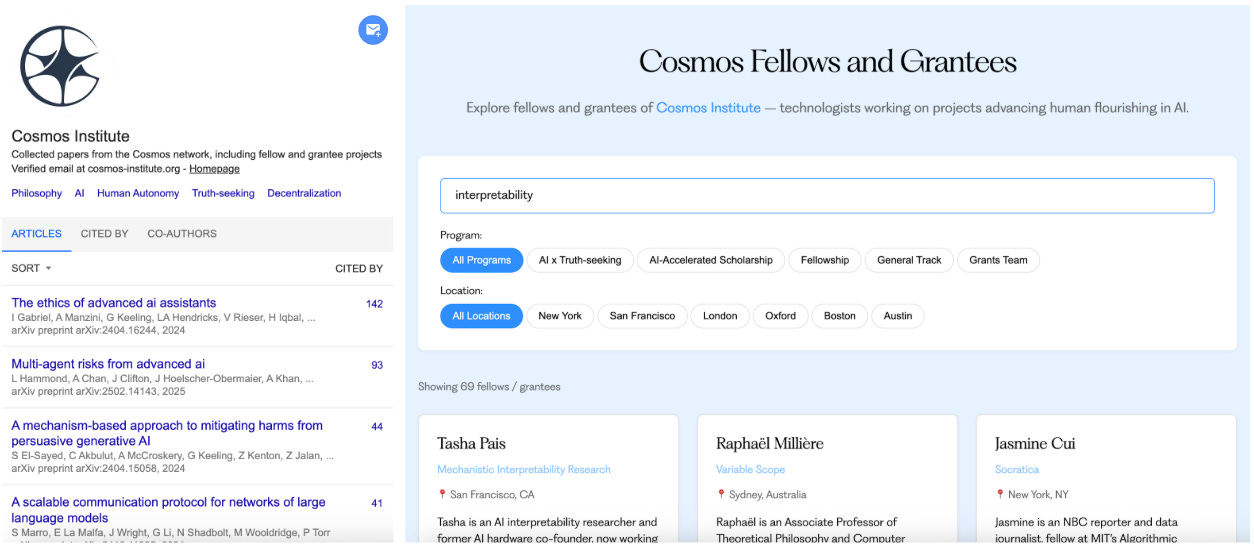

We grew our fast grants program to 140 builders and researchers. Through initiatives including a $1M AI for Truth-seeking program, many projects like Campus, Szdat, Kanonic, and Authorship began to take shape.

We supported 16 fellows working at the intersection of philosophical depth and frontier AI, many of them based at Oxford HAI Lab. This led to 34 research papers, from “The Philosophic Turn for AI Agents” to “Full Stack AI Alignment” to “Martingale Scores.”

Through our incubation fellowship, Samuele Marro launched a new non-profit called the Institute for Decentralized AI. We backed 8 philosopher-builders to create new companies that take their ideas to world-changing scale. And together with IHS, we started funding AI tool use for scholars in philosophy and the humanities: researchers like Kevin Vallier and Seth Lazar integrating these tools into serious intellectual work.

What matters more than numbers is the community that emerged: people who share a conviction that the principles of a free society must be translated into the systems we’re building, and that the window for doing so is shorter than most people realize.

What I don’t know

The practices I’ve developed personally feel almost monastic: blank-page journaling before consulting AI, deliberately choosing the harder cognitive path, regular reading groups. Preserving the independence, force, and originality that remain to us seems to require a level of intentionality we often tell ourselves we don’t have time for.

I believe the classical liberal tradition has resources for this moment. But translation is hard: principles like truth-seeking and autonomy don’t map easily onto architecture choices and agent interfaces. And even when the direction is clear, building well requires judgment that can only come from practice. The ideas and the formation have to come together.

What I’ve come to believe is that this practical wisdom has to be developed in community: through seminars, collaborations, and shared building. And probably through intensive multi-month human formation, which will require new thinking.

The philosopher-builder isn’t a solitary figure. The archetype only works if there are institutions that cultivate it. That’s what we’re trying to build.

Looking forward

This year, Harry Law and I are writing a book on what it takes to be a free agent in the AI age. I’m going to work closely with Philipp Koralus on research at Oxford’s Human-Centered AI Lab, and deepen connections with collaborators at the top labs as things move fast.

We are bringing this community together in person. More gatherings, more informal meetups, especially in Austin. And we’re training the next generation of philosopher-builders through a new format we’ll announce soon.

We’re hiring and will share roles soon. If our mission resonates, get in touch.

The question I keep returning to is simple: What kind of people do we want to become in a world where thinking is optional? The answer will determine what we build.

The flame of a free society has been passed from debate to debate, text to text, institution to institution for 2,500 years. Now it needs to live in code.

To the donors, fellows, collaborators, and readers who made this year possible:

Thank you.

Brendan

Cosmos Institute is the Academy for Philosopher-Builders, technologists building AI for human flourishing. We run fellowships, fund fast prototypes, and host seminars with institutions like Oxford, Aspen Institute, and Liberty Fund.

Looking forward to what Cosmos will do in the year ahead. The philosopher-builder community is growing, in a time when we need it most.

I've used Claude a lot over the past year, and noticed myself trusting my own decisions less. This concept, and the blank-page journaling exercise of asking "What do I (emphasis on the 'I') think?" before interacting with AI, are what I'm coming around to this year: "You become a good thinker by doing the thinking, badly at first, then less badly. The practice is constitutive."

I attended an AI conference for business leaders and it was astonishing how much judgment, thoughtfulness, and decision-making they're handing over to AI. And how much they feel like they're optimizing their lives. The human experience wasn't designed to be optimized!