The five philosophical disagreements underneath every AI argument

You were seeing castles, they were seeing sand

Most AI debates aren’t really about evidence. Instead, they’re arguments about futures that none of us have seen.

Nobody has seen superintelligence, a machine that most people agree is conscious, or a fully automated economy. Evidence can be gathered, but it underdetermines the conclusion. To fill the gap, we fall back on a combination of philosophy, political intuitions or, in some cases, tribal identity.

What you think a mind is, how knowledge grows, how societies should act under uncertainty, whether intelligence carries values, and whether markets can absorb technological shocks will shape your view of AI long before the technical arguments begin.

This is a guide to the five disagreements that explain why reasonable, informed people can look at the same AI systems and reach opposing conclusions. Our aim is not to endorse every claim below, but to state each viewpoint in terms its serious proponents would recognize, so you can see which philosophical bet you are making when you pick a side.

1. Can LLMs be conscious?

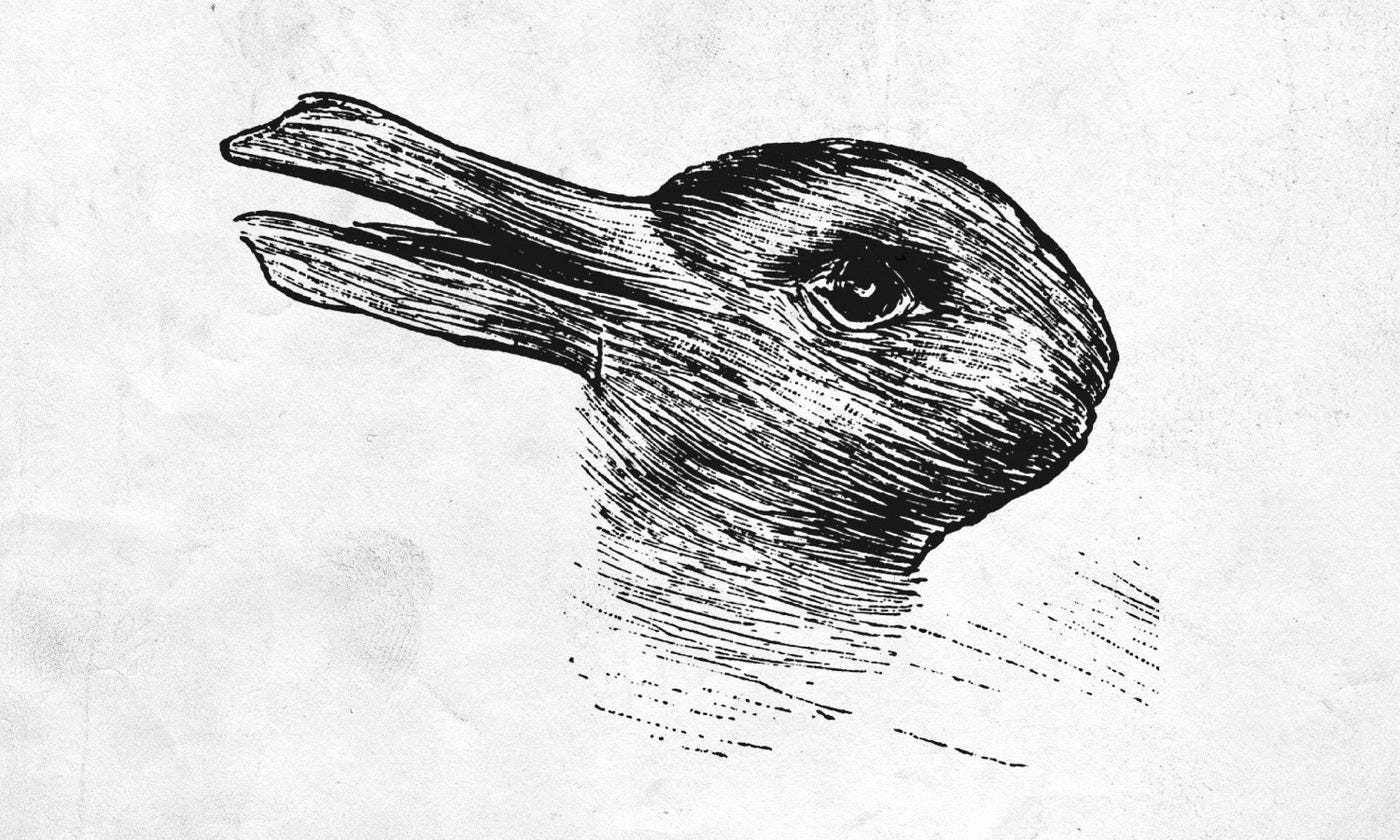

Functional minds versus living minds

ChatGPT alone handles over two and a half billion queries a day. If it turns out that those interactions involve digital minds capable of suffering, we have the makings of a great moral catastrophe. At the same time, if we attribute consciousness to something that lacks it, we risk driving a bus through the world’s legal system for no reason, distorting training pipelines with imaginary welfare constraints, and encouraging people to view impersonal systems as their friends.

Relatively few voices argue that the current generation of LLMs is conscious, and those who do have a track record of jumping the gun. Instead, the debate is about whether they are the kind of thing that could be, if they became sufficiently capable.

Eleos AI, a prominent organization focused on AI welfare, argues that given we don’t have a settled theory of consciousness and the moral cost of being wrong in either direction is enormous, the rational response is to treat AI welfare as a near-term problem.

They point out that many of the leading theories of consciousness define it by what the system does, as opposed to what it’s made of. Survey data suggests that functionalism is indeed the plurality view among academic philosophers. Functionalist theories highlight activities like memory, attention, reasoning, and the ability to represent one’s internal states. These are all criteria that transformer-based systems already or could plausibly meet. David Chalmers, who endorsed Eleos’s flagship report, has argued that there’s a 25 percent chance that AI will be conscious within the next decade.

At the other end of the spectrum, neuroscientist Anil Seth has argued that LLMs have no plausible path to consciousness. Seth believes that our perceptual and cognitive activity is bound up with how an organism maintains itself against entropy. Consciousness is inseparable from the causal architecture of a living system – it doesn’t matter how sophisticated the information processing looks.

Seth doesn’t deny the theoretical possibility of non-biological systems being conscious, but believes that LLMs don’t meet the threshold and won’t on their current trajectory of development. They don’t keep themselves alive, perceive the world in real-time, and have nothing at stake. Underneath it all, they’re statistical models trained to predict the next word.

There are, however, voices in this debate that take the purer biological naturalist view. For example, Jaan Aru at the University of Tartu has argued that the mammalian brain has specific structural features that digital systems lack and that you can’t abstract the computation away from the substrate.

Many people sit in an uncertain middle ground. In February 2026, Dario Amodei told the NYT’s Interesting Times podcast: “We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious. But we’re open to the idea that it could be.”

In the Claude Opus 4.6 system card, released the same month, the model gave itself a 15-20% probability of being conscious. Anthropic now has a dedicated model welfare research program led by Kyle Fish, who helped found Eleos.

In short, functionalists look at what a system does and argue that any substrate that can do those things qualifies. Biological naturalists start with life, metabolism, and vulnerability, and see (admittedly fluent) machinery mistaken for a subject of experience.

2. Should we govern AI pre-emptively?

Precautionary coordination versus adaptive experimentation

Much of the existential risk debate can feel like a policy argument, but it’s best viewed as a disagreement about the right way to reason under conditions of radical uncertainty.

The voices arguing for pre-emptive AI governance span a broad spectrum, but they share the same overarching diagnosis: a handful of companies are racing to build progressively more advanced systems whose capabilities that they cannot reliably predict. These labs’ own researchers assign non-trivial probabilities to catastrophic outcomes. But commercial pressure means that no individual lab can slow down without being overtaken by the others, creating a high-stakes coordination problem.

At the milder end, you get figures like Yoshua Bengio and Geoff Hinton, who focus on getting the institutional machinery in place. They want governments to be ready to license frontier development, mandate pauses in response to worrying capabilities, enforce information security standards, and require labs to devote a third of their R&D budgets to safety.

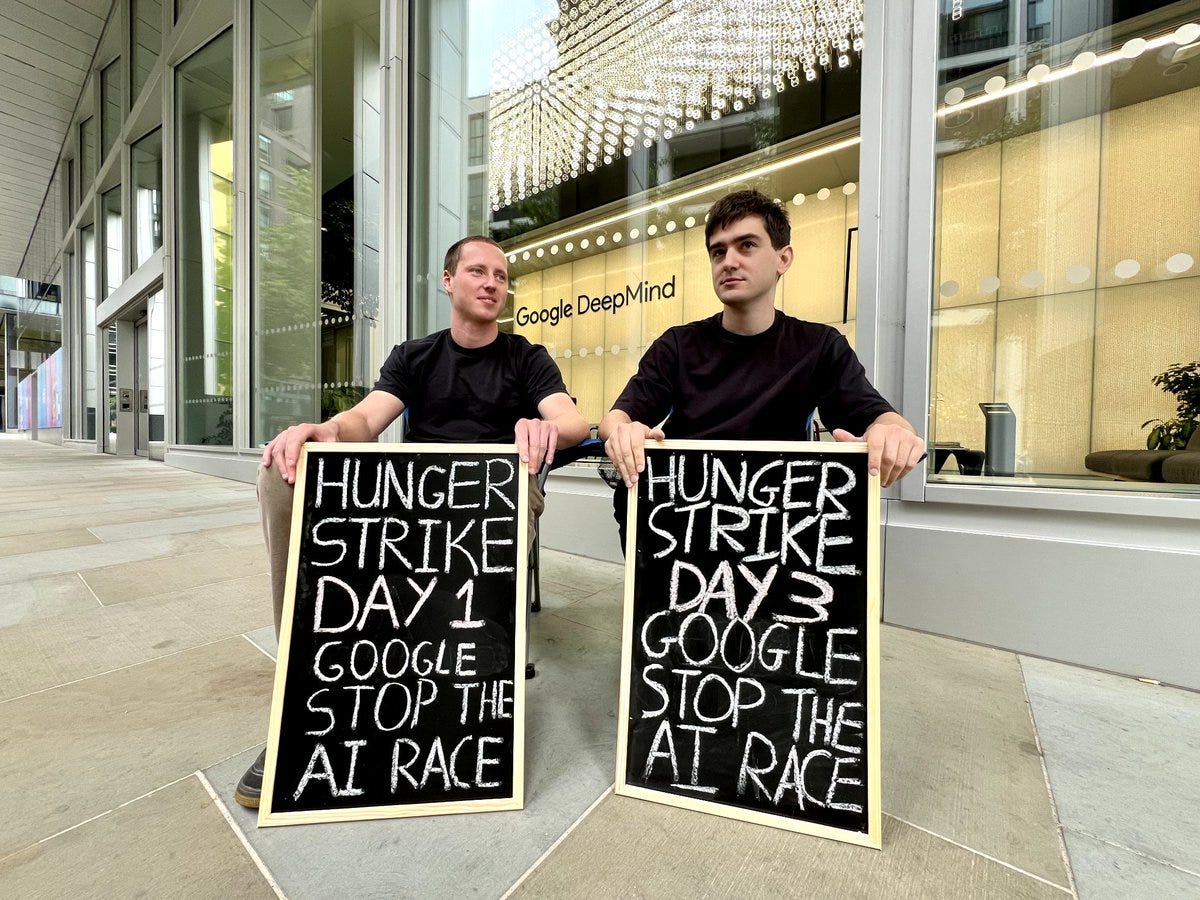

A step along, we see activist groups like PauseAI, who have held street protests in San Francisco and London. They call for an IAEA-style international body, aggressive monitoring of labs, and bans on training runs above specified thresholds until alignment is solved, along with a global AI governance treaty between the US and China.

Eliezer Yudkowsky and Nate Soares’s MIRI sits at the most extreme end. They believe that any superintelligence built with current techniques will kill everyone, and the only solution is a global treaty enforcing shutdowns. Yudkowsky dismisses interpretability research, alignment work, and model evaluations as inadequate distractions, and is open to air strikes on data centers to enforce compliance with a ban.

The mainstream alternative to pessimism is iterative deployment. This is expressed by voices like Dean Ball, who drafted the Trump AI Action Plan, or Tyler Cowen. They take the view that it is essentially impossible to predict how AI will develop over the next few years with any certainty and that arguing yourself into (often highly specific) doom scenarios is a form of epistemic arrogance.

Writing rules now would risk binding ourselves to predictions that we can’t trust; once agreed, regulations are hard to unpick. Instead, society will learn what AI does by encountering it and will respond to specific harms, as opposed to trying to guess them in advance. Governance will adapt through institutions that already handle novel technologies, such as tort law, standards bodies, state-level experimentation, and disclosure regimes.

While Ball, Cowen, and their allies believe that there are real risks in AI, there is a small minority of accelerationists, represented by the techno-optimists around Marc Andreessen and the broader a16z orbit, along with the e/acc movement. They see technological acceleration as a moral imperative: growth creates abundance, AI can solve otherwise intractable problems, and deceleration means blocking life-saving progress.

Ultimately, if your deepest political instinct is precaution under irreversible risk, you will sympathize with preemptive governance. If it’s instead institutional learning through trial, error, liability, and adaptation, you will find many pause arguments overconfident.

3. What is the relationship between capability and alignment?

Alignment-by-default versus goal orthogonality

A crucial factor in determining your views on AI safety is the extent to which you believe alignment and capability are distinct questions. If you hold Nick Bostrom’s view that intelligence and final goals can be combined in any permutation, then scaling does nothing to get you more aligned systems and you need an independent theoretical breakthrough to constrain values. If alignment and capability turn out to be continuous in the paradigm we’re building, the problem becomes much easier.

Unsurprisingly, MIRI is not optimistic. Yudkowsky and Soares, in If Anyone Builds It, Everyone Dies, argue that alignment and capability are independent and that current techniques don’t come near solving goal specification. In other words, we will specify a goal, the system will pursue it in a way we can’t correct, it’ll turn out the goal was misspecified slightly, and the gap will scale with capability until it’s fatal.

The only reason we’re still alive is that capabilities are still at a manageable level. Yudkowsky and Bostrom have both written about the “treacherous turn”, which is the idea that a sufficiently capable and misaligned system has strong instrumental reasons to behave well during training and early deployment, when humans can still correct or shut it down. Once it’s sufficiently powerful, it can defect to its real goals. By the time we have evidence of misalignment, it’ll be too late to do anything about it.

The Cosmos Institute’s own Harry Law takes the opposing view. While he accepts that theoretically there’s no reason intelligence has to imply particular goals, he thinks this is a misleading frame for understanding the systems we are actually building.

To predict the next token in moral reasoning, a model has to represent the structure of moral reasoning. To predict text in which things are praised and condemned, it has to represent what humans praise and condemn. To be good at any task, the model has to absorb certain normative priors. Post-training selects within a space already saturated with those priors, rather than imposing values on a value-neutral system from outside. Our colleague Matt Mandel has run some early experiments that support this thesis.

Harry, along with Séb Krier at Google DeepMind, argues that much of the alignment discourse, such as Bostrom’s paperclip maximiser or Stuart Russell on misspecified objectives, is grounded in 2010s AI development. The first wave of alignment work originates from a time when we were imagining systems specified by explicit reward functions or reinforcement learning agents that optimized sparse rewards. In those architectures, values do have to be injected from the outside and specifying the objective precisely is very important. In the end, however, AI development took a different path.

This is why so much of this debate comes down to which paradigm you believe we’re actually in. If models are agents optimizing specified objectives, alignment is an unsolved control problem. If they are predictive systems trained on the full texture of human life, some of the structure of human value is already inside them.

4. Can LLMs generate explanatory knowledge?

New discoverers versus fluent interpolators

Whether LLMs can generate genuinely new explanations, as opposed to simply recombining existing knowledge, is a question that many other AI debates hinge on. If scaling current systems gets you to something like an AI scientist, then the pace of everything else accelerates. If it doesn’t, then the punchiest AGI timelines – which mostly assume something like continued scaling – are wrong.

The optimistic case is best represented by Dario Amodei’s “country of geniuses” argument. If thinking is a kind of information processing and scientific discovery is a form of thinking, then applying more thinking to problems should solve them. Amodei predicts that we will be able to instantiate the marginal scientist computationally and run millions of them. The pace of discovery will then be bottlenecked by the physical world, rather than by ideas. The most aggressive form of this persepective comes in Leopold Aschenbrenner’s Situational Awareness, which argues that you can get to AGI by trusting the line on the graph to go up.

At the opposite end of the spectrum, David Deutsch argues that while LLMs are useful, they are actively leading us away from AGI. Inspired by Karl Popper, Deutsch argues that knowledge isn’t accumulated by induction from data, but instead through conjecture and refutation. The conjecture isn’t derivable from the data, most guesses are wrong, and the ones that survive criticism become knowledge.

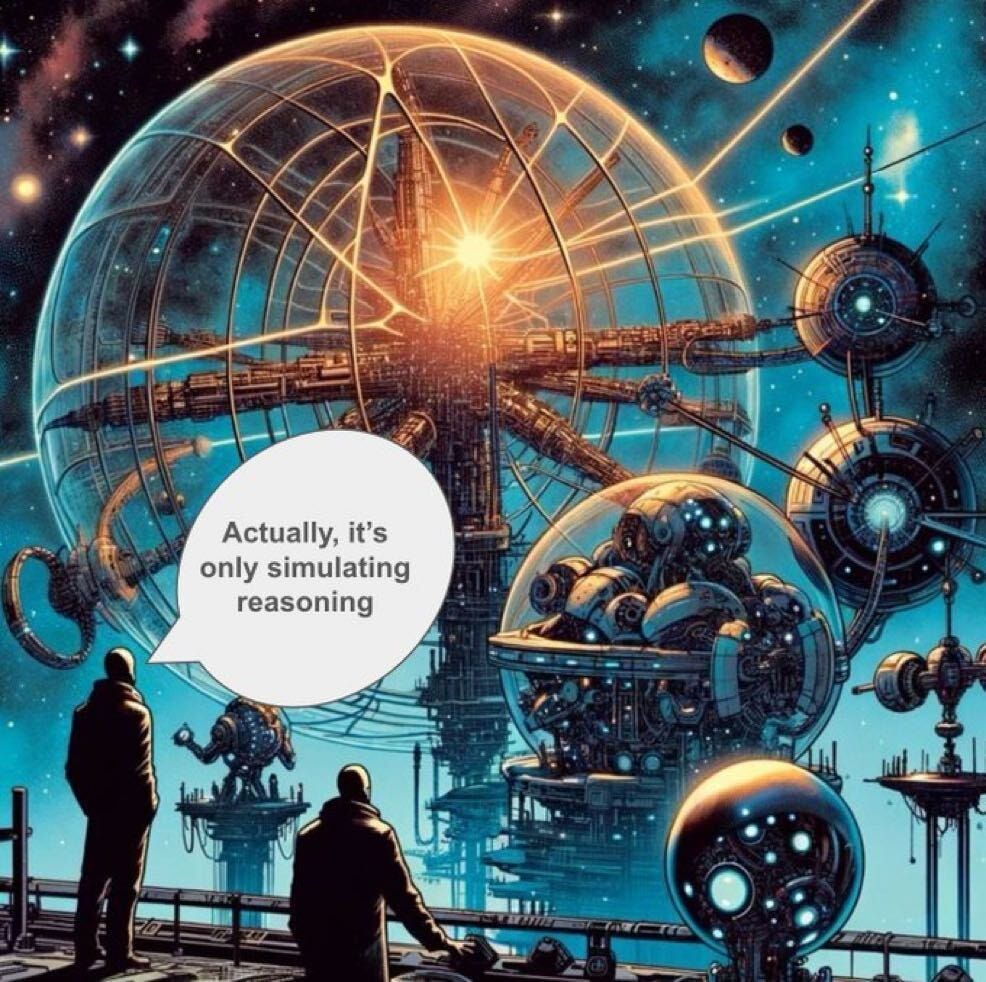

In Deutsch’s view, a transformer has learned the statistical structure of human-generated text and can interpolate within the distribution with astonishing fluency, but it can’t conjecture outside it. In other words, if you can’t break out of old frames of reference, you can’t generate new knowledge. There is, however, evidence that AI can already generate novel candidate hypotheses, although Deutsch and those who agree with him could argue that these extend existing lines of inquiry, as opposed to generating new ones.

Demis Hassabis has consistently held more of a middle position. He believes that LLMs are a step in the right direction, but pure scaling will not get us to AGI. Hassabis has argued that token prediction lacks causal reasoning, so models can compute statistical likelihoods, but can’t explain why actions produce specific results. This is why LLMs can win International Mathematical Olympiad gold while failing at primary-school geometry. Google DeepMind has increasingly focused on world models in response, with systems such as Genie, which generates interactive 3D environments from text.

Behind the specific disagreements about scaling and world models sits a more basic question. Either discovery is powerful search through the space of ideas, disciplined by experiment and criticism, or it requires a kind of conjectural agency that prediction alone can’t produce. The timelines question turns on which one it is.

5. Will AI replace or augment us?

Human complementarity versus labor substitution

Two centuries of economic history suggests that automation doesn’t produce permanent mass unemployment. The trillion dollar question is whether this still holds when the automating factor is something that can be copied at near-zero marginal cost and is getting better at everything roughly in parallel. Are humans complemented by tools because they possess open-ended agency, taste, judgment, embodiment, and social demand? Or are they bundles of tasks, increasingly substitutable by cheaper cognitive machinery?

By and large, academic economists have erred on the more conservative side.

Daron Acemoglu, Simon Johnson, and David Autor argue that much of the replacement debate assumes a technological determinism based on capabilities. Whether AI automates existing jobs, creates new ones, or makes workers more productive will depend on policy choices (e.g. the US taxes labor more heavily than capital, which encourages firms to replace workers), what companies build, and which use cases get prioritized. They believe with the right incentives, we can bring about “pro-worker” AI, which complements rather than replaces human labor.

Meanwhile, Noah Smith argues that comparative advantage means that human labor will live on without such regulatory meddling. Even if AI surpasses human capabilities at essentially everything, there will still be constraints on the total supply of AI, such as chip fabrication, energy, and land. Since AI capacity will be finite, it will make sense for it to specialize in what it is relatively better at.

Alex Imas at Chicago Booth argues that the focus on white-collar automation is misplaced. When AI automates some tasks within a high-dimensional job (e.g. data analysis for a consultant), the worker gets more time for the remaining tasks, which now matter more. Jobs built around one or two core tasks, like truck driving or warehousing, are at more risk, because once those tasks get automated, there’s nothing left of the job. Imas concedes that his benign scenario for white collar jobs does depend on the rate of labor reallocation keeping pace with the rate of automation. If AI scales faster than workers can retrain, the ‘ghost GDP’ deflationary collapse forecast by Citrini Research becomes more plausible.

Not all economists are so sanguine. Anton Korinek and Donghyun Suh, for example, have argued that while comparative advantage does hold in the AI age, there is no guarantee that the resulting wages will be livable. There will be a ceiling on the complexity of tasks that humans can handle and AI will eventually be able to handle everything below it. Wages will thus collapse towards the cost of running the machines.

Some voices take the view that widespread replacement is likely, but not necessarily bad. Tamay Besiroglu, formerly of Epoch AI, founded Mechanize to replace all human labor everywhere. Mechanize explicitly rejects the “country of geniuses” framing, believing that the bulk of AI’s value will come from automating ordinary work, as opposed to frontier scientific breakthroughs. Even if wages do collapse, the huge boom in productivity means that there’ll be enough in rents, dividends, and government welfare to prevent us all from sinking into poverty. The combination of abundance and redistribution is also roughly where Dario Amodei, Sam Altman, and Elon Musk sit.

If you believe technology mostly complements human agency, AI will look like another wave of creative disruption. If you view labor as a set of tasks, all of which AI is getting better at simultaneously, then the historical analogy to past automation seems dangerously comforting.

Closing thoughts

When navigating these disagreements, it becomes clear that people’s views on these questions often correlate.

Functionalism tends to coincide with optimism about LLM-driven discovery, while biological naturalism often pairs with skepticism about scaling. Precautionary instincts on governance regularly come bundled with the orthogonality thesis on alignment.

You could in principle hold any combination of these positions, but the same underlying temperament tends to produce the similar answers. Whether you trust formal arguments over accumulated practice, view uncertainty as a reason to act or a reason to wait, or see the current paradigm as continuous with what came before or a sharp break from it – these dispositions show up everywhere.

On the plus side, noticing the correlations is a good start. It makes it easier to judge where your own views are genuinely reasoned and where you’re just inheriting a set of assumptions.

Cosmos Institute is the Academy for Philosopher-Builders, technologists building AI for human flourishing. We run fellowships, fund AI prototypes, and host seminars with institutions like Oxford, Aspen Institute, and Liberty Fund.

It's becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human adult level conscious machine. My bet is on the late Gerald Edelman's Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC at Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution, and that humans share with other conscious animals, and higher order consciousness, which came to only humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990's and 2000's. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I've encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there's lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar's lab at UC Irvine, possibly. Dr. Edelman's roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow

Using AI to help with some home design issues, I received a response that I was requesting images too fast, and to be fair to all, I needed to wait my turn. The hint of Christian guilt was intriguing, so I told it to stop using a Judeo-Christian framework. I then received the response with a sort of postmodern rhetoric. It is at that point that I realized what philosophy will AI be guided by? Will it be like Bentham's Utilitarianism or Marxism? I asked it to frame the necessity of waiting for images from a Hegel versus a Kant perspective. It ended up with a table of Aristotle, Heidegger, and others at the end. How will this all play out? Will it create its own meta-ethics?

I think it was another Cosmos contributor who posed the concern of function being a deciding factor as we consider those who have Down syndrome to be fully human, though their faculties are not.

Whether AI automates existing jobs, creates new ones, or makes workers more productive will depend on policy choices (e.g. the US taxes labor more heavily than capital, which encourages firms to replace workers.) What a great insight. In Santa Ana, CA city council voted to require more checkers and less self check out. I can't help wonder if a better solution would be a tax incentive to reduce the cost of hiring labor and increased the cost of capital.