Science Needs Scientists

And Scientists Need Science

Today’s essay is a guest post by Iulia Georgescu, a physicist and independent scholar researching the history of computational physics.

“Artificial Intelligence will revolutionize science.” The phrase is usually invoked by researchers when discussing the promise of thinking machines to help us understand the natural world. In the early 2020s, I subscribed to, and was perhaps even evangelizing for, the role of AI in scientific research. Like many of my colleagues, I was gripped by a new tool that promised so much.

More recently my view has changed. I still believe that AI will be fantastically useful, but not necessarily in the way we think. Discovery, after all, is not the same as understanding. As scientists, we need to engage with the process of inquiry to truly make sense of what we learn about the world. Only then can we understand.

The Long History of “AI for Science”

In the summer of 1953, physicists Enrico Fermi and John Pasta and mathematicians Stanislaw Ulam and Mary Tsingou ran the first “numerical experiment” on MANIAC, one of the early electronic computers built at the Los Alamos National Laboratory after World War II. Computers were new, and scientists were excited to explore their use in solving research problems such as simulating the dynamics of atoms and molecules, studying tumor cell populations, and investigating fluid dynamics numerically. Researchers at Los Alamos even created the first documented chess-playing program to defeat a human.

This group decided to model a chain of oscillators (identical masses connected by springs), add a small nonlinearity (a quadratic or cubic term), and see what would happen. The expectation was that the system would reach equilibrium: common sense predicts some wiggling around, after which the energy ends up equally distributed along the chain. The results were surprising. Instead of settling down, the system showed recurrent behavior in which the energy spreads throughout the chain, then comes back, before spreading out again. This observation sparked interest in the study of nonlinear systems that would later lead, among other things, to the discovery of solitons (waves that travel freely while preserving their shape) and the development of chaos theory in the 1960s and 1970s.
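To make the setup concrete, here is a minimal sketch of such a numerical experiment in Python. It is not the original MANIAC program: the chain length, the nonlinearity strength `alpha`, the time step, and the velocity Verlet integrator are all illustrative assumptions, and the function names are hypothetical. The chain starts with all of its energy in the lowest vibrational mode, and `mode_energies` reports how that energy is shared among the modes at any later time.

```python
import math

def accelerations(x, alpha):
    """Force on each unit mass in a fixed-end chain with a quadratic nonlinearity."""
    padded = [0.0] + x + [0.0]  # the two walls do not move
    acc = []
    for i in range(1, len(x) + 1):
        right = padded[i + 1] - padded[i]  # stretch of the spring to the right
        left = padded[i] - padded[i - 1]   # stretch of the spring to the left
        acc.append((right - left) + alpha * (right ** 2 - left ** 2))
    return acc

def total_energy(x, v, alpha):
    """Kinetic energy plus harmonic and cubic spring energy."""
    padded = [0.0] + x + [0.0]
    kinetic = 0.5 * sum(vi * vi for vi in v)
    potential = 0.0
    for i in range(len(padded) - 1):
        d = padded[i + 1] - padded[i]
        potential += 0.5 * d * d + alpha * d ** 3 / 3.0
    return kinetic + potential

def mode_energies(x, v):
    """Harmonic energy in each normal mode of the linear chain."""
    n = len(x)
    norm = math.sqrt(2.0 / (n + 1))
    energies = []
    for k in range(1, n + 1):
        shape = [math.sin(math.pi * k * i / (n + 1)) for i in range(1, n + 1)]
        q = norm * sum(s * xi for s, xi in zip(shape, x))
        p = norm * sum(s * vi for s, vi in zip(shape, v))
        omega = 2.0 * math.sin(math.pi * k / (2 * (n + 1)))
        energies.append(0.5 * (p * p + omega * omega * q * q))
    return energies

def initial_state(n, amplitude):
    """Start at rest with the chain displaced in the shape of its lowest mode."""
    x = [amplitude * math.sin(math.pi * i / (n + 1)) for i in range(1, n + 1)]
    return x, [0.0] * n

def simulate(x, v, alpha, dt, steps):
    """Advance the chain with velocity Verlet (an assumed, not historical, integrator)."""
    a = accelerations(x, alpha)
    for _ in range(steps):
        v = [vi + 0.5 * dt * ai for vi, ai in zip(v, a)]
        x = [xi + dt * vi for xi, vi in zip(x, v)]
        a = accelerations(x, alpha)
        v = [vi + 0.5 * dt * ai for vi, ai in zip(v, a)]
    return x, v

if __name__ == "__main__":
    x, v = initial_state(16, 1.0)
    for block in range(5):
        shares = mode_energies(x, v)
        total = sum(shares)
        print(block, [round(e / total, 3) for e in shares[:4]])
        x, v = simulate(x, v, alpha=0.25, dt=0.05, steps=2000)
```

Running the loop for much longer and plotting the share of energy in the first few modes is one way to look for the recurrence described above. How quickly it shows up depends on the chosen parameters.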

If one were to assign a birth year to computational physics, that honor should go to 1953, when Fermi and colleagues first made an unexpected discovery through a purely computational approach. Computers had been used for physics and astronomy calculations before – for example, in the 1930s mechanical IBM accounting machines were modified to solve the differential equations of planetary motion by numerical integration – and had played a key role in the development of the atomic bomb during the Manhattan Project. In the following decades computers would transform scientific research. Today, there are almost no advances in physics that are not enabled by some aspect of computer simulation.

Three years after the “first numerical experiment”, the term artificial intelligence was coined, and in 1956 the Logic Theorist system made a first attempt to use automated reasoning to prove mathematical theorems. The first AI “expert” system, DENDRAL, was created in 1965. Combining a knowledge base with a reasoning engine, it was capable of determining the molecular structure of a compound from its mass spectra. Here, “expert” refers to a kind of AI system that encodes domain knowledge from human experts as rules and applies them through an inference engine.

Other examples include LHASA, a program designed in 1972 to discover sequences of reactions to synthesize a molecule; the Automated Mathematician, a heuristic-based program designed in 1977 to discover new mathematical concepts and theorems; and expert systems like MYCIN (to identify bacteria) and PROSPECTOR (for mineral analysis). In the late 1980s and early 1990s, artificial neural networks were used to tackle problems in particle physics, astrophysics, and, in a glimpse of what was to come, protein structure prediction. By the 2000s, AI methods were in use in many areas of physics and even assisted the data analysis that led to the discovery of the Higgs boson.

In the 2010s and 2020s, scientists began to use a wave of powerful neural network-based tools to solve research problems. Some of these advances, such as Google DeepMind’s AlphaFold protein prediction system, made headlines around the world. Others were important but less glamorous. Machine-learned interatomic potentials, for example, significantly speed up materials and chemistry simulations yet remain unknown outside the research community.

But if “AI for science” has a long history, why has it only recently started to make the news? In his 2003 book, Douglas S. Robertson coined the term “phase change” to describe a radical change that an instrument makes possible compared to the prior state of the art. AI may be a phase change in how we do science, but not only because of powerful individual tools. It is their extreme accessibility, from large language models to the AlphaFold Protein Structure Database, that sees advances in one field become instruments of exploration in others.

Epistemic Enhancers

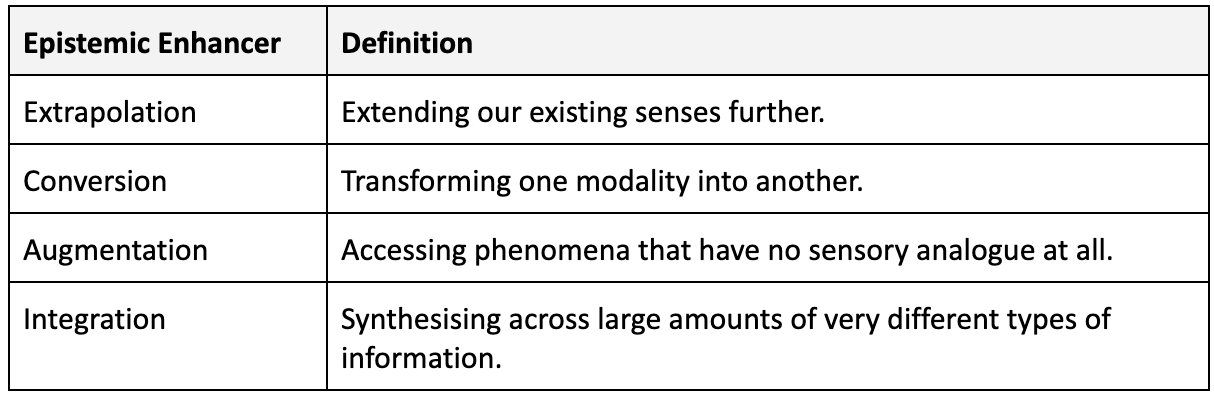

Scientific instruments provide extensions to humans’ senses. Telescopes and microscopes allowed us to see the far and the small. This type of enhancement is known as extrapolation. Another type of epistemic support is conversion, that is, transforming one modality into another (like sound into a visual image). Finally, we have those instruments that extend our capacities by giving access to phenomena that elude our senses, such as detecting radiation or magnetic fields. This is known as augmentation. These three moves – extrapolation, conversion, and augmentation – are all types of epistemic enhancers.

A central motivation behind scientific practice is understanding. But what is scientific understanding? The 2009 book Scientific Understanding, edited by Henk W. de Regt, Sabina Leonelli, and Kai Eigner, suggests that there is no universal definition of scientific understanding. Understanding in physics differs from understanding in biology or engineering. Even within my own discipline, physics, what we mean by understanding is not straightforward. While clearly related to the explanation of a phenomenon, understanding is not precisely the same thing. There is a difference between understanding a phenomenon and understanding the theories or models that explain it.

A prerequisite for understanding is discovery insofar as one cannot understand a phenomenon that has not been observed. Discovery is the process or product of successful scientific inquiry. Objects of discovery are things, events, processes, causes, and properties as well as theories and hypotheses and their features. The 1987 book Scientific Discovery proposed that discovery in science can be broken down into problem solving tasks and therefore can be automated with computers.

The authors of the book built four types of programs to look for quantitative or qualitative laws and structural models. They then used these programs, individually and in combination, to rediscover many laws in physics and chemistry. While the authors recognized that no single process accounts for scientific discovery, they showed that most discovery processes can be cast as problem-solving tasks that can be tackled with heuristics. Their method, based on tree searches, was general in theory but limited in practice, because the combinatorial explosion of possible paths made the search intractable for problems beyond a modest scale.
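As a rough illustration of discovery cast as search, the sketch below looks for a quantity that stays constant across a small table of planetary data. It is not the book's method: it swaps the heuristic tree search for a brute-force scan over integer exponents, and the function names, the exponent range, and the rounded orbital values are assumptions made for this example. It also counts how fast the candidate space grows with the number of measured variables, which is the combinatorial explosion mentioned above.

```python
import itertools
import statistics

# Semi-major axis (astronomical units) and orbital period (years), rounded.
PLANETS = {
    "Mercury": (0.387, 0.241),
    "Venus": (0.723, 0.615),
    "Earth": (1.000, 1.000),
    "Mars": (1.524, 1.881),
    "Jupiter": (5.203, 11.862),
}

def relative_spread(values):
    """Standard deviation divided by the mean; near zero for a conserved quantity."""
    return statistics.pstdev(values) / abs(statistics.mean(values))

def find_invariant(data, max_power=3):
    """Scan a**p * t**q over integer exponents and keep the most constant candidate.

    p is kept positive so that a law and its reciprocal are not both counted.
    """
    best = None
    tried = 0
    exponents = itertools.product(range(1, max_power + 1),
                                  range(-max_power, max_power + 1))
    for p, q in exponents:
        tried += 1
        values = [a ** p * t ** q for a, t in data.values()]
        spread = relative_spread(values)
        if best is None or spread < best[0]:
            best = (spread, p, q)
    return best, tried

def candidate_count(n_variables, max_power=3):
    """Size of the full exponent grid when n_variables quantities are measured."""
    return (2 * max_power + 1) ** n_variables

if __name__ == "__main__":
    (spread, p, q), tried = find_invariant(PLANETS)
    print(f"most constant candidate: a^{p} * T^{q}, spread {spread:.4f}, {tried} tried")
    for n in (2, 4, 6, 8):
        print(n, "variables:", candidate_count(n), "candidates")
```

With two variables the scan is trivial, and the most constant candidate it finds is the ratio behind Kepler's third law. With more variables, or with richer operations than products of powers, the number of candidates grows so quickly that exhaustive search stops being practical, which is why heuristics are needed to prune it.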

Both traditional numerical methods and modern AI tools enhance epistemic extrapolation and conversion. The application of such methods represents a difference in kind as well as magnitude for scientific practice. As Paul Humphreys put it: “This extrapolation of our computational abilities takes us to a region where the quantitatively different becomes the qualitatively different.” This is because these simulations cannot be carried out in practice except in regions of computational speed far beyond the capacities of humans. This dual articulation of the quantitative and the qualitative could be taken as an argument for why modern AI tools ought to usher in a new way of doing science.

In the 1960s, mathematicians Bryan John Birch and Peter Swinnerton-Dyer used the EDSAC-2 computer at the University of Cambridge Computer Laboratory to run numerical calculations that allowed them to state a conjecture about the set of rational solutions to equations defining an elliptic curve. This is now known as the Birch and Swinnerton-Dyer (BSD) conjecture and is one of the seven Millennium Prize Problems. The computer helped them explore an abstract space so they could formulate the conjecture. This computer-assisted discovery falls in the final category of epistemic enhancers mentioned above: augmentation.

If the formulation of the BSD conjecture is a weaker example of augmentation, more recently AI methods helped mathematicians make a breakthrough towards solving it. Using machine learning, mathematicians discovered unexpected oscillatory patterns in the parameter space key to BSD (of hundreds of dimensions) which led to the “murmuration” conjectures. Progress has also been made towards solving another Millennium Prize Problem as AI tools were used to find potential singularities in the Navier-Stokes equations.

Although automated theorem proving programs have been around for roughly 70 years, these examples do suggest that we are witnessing, in Robertson’s terms, a phase change. The quantitatively different becomes the qualitatively different in a more interesting way. It’s speed, yes, but it’s also breadth. Take AlphaFold and the AlphaFold Protein Structure Database it made possible. What makes it revolutionary is not only the improved accuracy of the protein structure prediction, but also its usability by scientists from many fields (who can access hundreds of millions of protein structure predictions).

We are yet to see the full extent of what AI tools can do for scientific research, but one differentiator between them and traditional scientific instruments, including computer simulation, is that they combine epistemic enhancers to explore vast dimensions of information. Remember DENDRAL, the early AI system determining the molecular structure of a compound from its mass spectra? Now, imagine the power of its modern incarnation which has access to several databases of molecular spectra, can cross-check with published scientific literature, and has the ability to write code to perform additional calculations if needed.

Or consider a powerful telescope that discovers a new exoplanet. Here an AI tool analyzes the spectra, determines the likely composition of the exoplanet’s atmosphere, compares it with existing records, runs simulations with the known and inferred parameters to better characterize the exoplanet, and adds it to the catalog. All of these individual steps are already done separately, and for each one the use of AI tools can be seen as an evolutionary improvement. But the combination of these improvements, working together across different sources of information, is potentially revolutionary.

If I’m permitted to add a new epistemic enhancer category for AI as an instrument, the most appropriate would be integration (as in synthesizing across large amounts of very different types of information). When a tool synthesizes across hundreds of heterogeneous sources simultaneously, the inferential path from evidence to conclusion can become difficult for the scientist receiving the result to trace. The more dimensions integration spans, the wider this gap between discovery and reconstruction becomes. Where earlier epistemic enhancers extended what scientists could see or calculate, integration may increasingly determine what they conclude.

Our Role as Scientists

At the end of his book, Humphreys argued that computer simulation brings a “shift of emphasis in the scientific enterprise away from humans.” By the 1980s, researchers already knew that, at least in part, scientific discovery and problem generation could be successfully automated. Modern AI methods can do the same thing, only much faster and much better. I regularly hear from colleagues that in the coming years AI will take over many of the tasks associated with scientific inquiry.

Use of AI will likely mean more discoveries overall, but to discover is not to understand. Henk de Regt argued that “scientists achieve understanding of phenomena by basing their explanations on intelligible theories. The intelligibility of theories is related to scientists’ abilities: theories are intelligible if scientists have the skills to use those theories in fruitful ways.” Quantum mechanics is an example of a very effective yet not particularly intelligible theory: despite its practical successes, its interpretation still eludes physicists. If AI tools can optimize discovery and generate explanations, they could perhaps also help produce more intelligible theories.

Yet intelligibility is not a property of theories themselves. As de Regt suggests, it depends on the capacities of the scientists who use them. A theory becomes intelligible only when scientists acquire the skills to explore its implications and apply it fruitfully. Even if machines make discoveries and generate explanations, this kind of understanding still depends on the participation of human investigators. Put differently: scientists need science just as science needs scientists.

Cosmos Institute is the Academy for Philosopher-Builders, technologists building AI for human flourishing. We run fellowships, fund fast prototypes, and host seminars with institutions like Oxford, Aspen Institute, and Liberty Fund.
