Brave New Nudge

“Liberal AI” and The Kindest Threat to Freedom

Imagine a system that knows you eat sensibly all day and fall apart at 10pm. That knows a calorie label won’t help you, because the evidence says calorie labels mostly change the behavior of people who already eat well.

This system knows the difference between your hunger and your boredom. It leaves you alone when you don’t need it. It preserves every option on the menu. It just rearranges the choice so you’re more likely to do what you’d do if you were thinking clearly.

Now extend this to every significant decision you make. Cars, insurance, investments, medical treatment, career moves. A system that corrects for your specific biases, knows what you’ll regret, and steers you toward what it calculates you really want. The full menu is technically available, but the system is right often enough that overriding it starts to feel like pride, and convenient enough that you stop wanting to.

Cass Sunstein—co-author of Nudge, former White House regulatory czar, the most influential regulatory thinker of his generation—calls this a “Choice Engine.” His arguments tend to become law. I think the Choice Engine’s greatest danger is that it works.

His new paper “Liberal AI,” prepared for a lecture at Oxford this May, makes the strongest case yet that AI-powered choice architecture can respect autonomy while improving welfare. The logic runs like this. Classical liberalism, following Mill and Hayek, grounds freedom of choice in an epistemic claim: the chooser knows best. AI disrupts that claim by knowing better. Better than the individual about her own informational gaps, her biases, what she’ll want next year. So Choice Engines can personalize the nudge, correct the bias, and never technically remove the freedom to choose otherwise. Liberal AI is no oxymoron.

Sunstein flags the risks. He talks about manipulation, self-interested designers, and AI’s own biases. He calls for regulation. He distinguishes liberal Choice Engines from illiberal ones. But even the liberal version is steering people toward choices they wouldn’t have made on their own. Sunstein knows this is paternalism. He thinks preserving opt-out keeps it libertarian rather than something worse. I argue that it does not.

Mill’s Music

Sunstein’s Mill is an information theorist. In On Liberty, Mill argues that each person is “the person most interested in his own well-being,” possessing “means of knowledge immeasurably surpassing those that can be possessed by anyone else.” When outsiders intervene, they rely on “general presumptions” that are likely wrong or misapplied.

On this reading, the case for freedom of choice is instrumental: people should choose for themselves because they know their own circumstances best. If the case for freedom rests on the chooser’s informational advantage, and AI erases that advantage, then the case for freedom weakens. AI knows your medical history better than you remember it, your spending patterns better than you track them, your recurring mistakes better than you admit them. The epistemic ground has shifted. So far, so honest.

But Sunstein himself notices something that should give him pause. He observes that “the words of On Liberty are epistemic, but the music is romantic.” Then he proceeds to work exclusively with the words.

The words say: people should choose freely because they know best. The music says something else entirely. It says that the process of choosing is what makes a person a person. Mill:

The human faculties of perception, judgment, discriminative feeling, mental activity, and even moral preference, are exercised only in making a choice. He who does anything because it is the custom, makes no choice. He gains no practice either in discerning or in desiring what is best. The mental and the moral, like the muscular powers, are improved only by being used.

This is an argument about formation. Mill is not saying that choosers make better decisions (the information argument). He is saying that choosing makes better choosers—people with developed faculties of perception, judgment, and what he calls “discriminative feeling,” the ability to tell the difference between what matters and what doesn’t. These faculties, like muscles, grow through exercise and atrophy through disuse. The quality of any particular decision matters less than what the process of deciding does to the person who decides.

Once you hear the music, Sunstein’s proposal sounds different.

Consider a person who consults a well-designed Choice Engine for a car purchase and accepts its recommendation. She may end up with a better car than she would have chosen on her own. Sunstein counts this as a welfare gain.

But if the evaluative work was done by the Engine, if she did not weigh the tradeoffs, interrogate her own priorities, or struggle with the uncertainty of not knowing what she really wants, then her faculties of judgment were not exercised. She received an outcome without undergoing the process that develops the capacity to arrive at such outcomes independently.

Do this once and the effect is trivial. Do this for every significant choice across a decade, and you have a person whose formal freedom of choice is perfectly intact, whose welfare as measured at each decision point is maximized, and whose capacity for independent evaluation has atrophied through sustained disuse. She can always opt out, but she no longer has the developed faculties that would make opting out a meaningful exercise of judgment.

Mill has a name for this person. “One whose desires and impulses are not his own, has no character, no more than a steam-engine has a character.”

She may be doing well by every metric Sunstein tracks. But the evaluative standards by which “well” is determined have migrated from her judgment to the algorithm. She is content without being, in any sense Mill would recognize, free.

Half of Hayek

Hayek’s case for markets rests on two claims about knowledge: that markets aggregate it efficiently, and that participation in institutions like markets is what produces it.

Sunstein takes the first half and drops the second. He reads Hayek as making an argument about information processing, where markets aggregate dispersed knowledge more effectively than central planners. If AI can aggregate that knowledge even more effectively, the Hayekian argument for leaving people alone weakens.

But the knowledge Hayek cares about is not the kind of thing that can be aggregated. The knowledge of “the particular circumstances of time and place” is not sitting in a warehouse waiting to be collected. It comes into existence through the activity of people navigating uncertainty, bearing consequences, and adjusting. This is not a computational problem.

The structure in which choosing happens is itself doing something irreplaceable. Remove the individual from the process and you do not just lose one person’s contribution. You lose the conditions under which that person would have developed the knowledge worth contributing. The loss compounds.

AI would have to live your life to fully replace your epistemic role in it. But partial replacement may be enough to leave you unable to perform it yourself.

When Hayek writes that coercion “eliminates an individual as a thinking and valuing person and makes him a bare tool in the achievement of the ends of another,” Sunstein reads this as an argument against coercion, which Choice Engines avoid because they preserve opt-out. But read the sentence again. The operative phrase is not “coercion.” It is “eliminates an individual as a thinking and valuing person.” That elimination can happen without coercion. It can happen through substitution, through a system that does the thinking and valuing for you so effectively that your own faculties are never called upon.

Hayek’s nightmare was central planning, but his deepest fear was not the planner’s malice. It was the replacement of a distributed process of discovery with a system that delivers answers without requiring anyone to find them. Choice Engines are that system. No one is coerced, and no one means harm. And yet it is Hayek’s nightmare, built by people who thought they were honoring him.

The Missing Axis

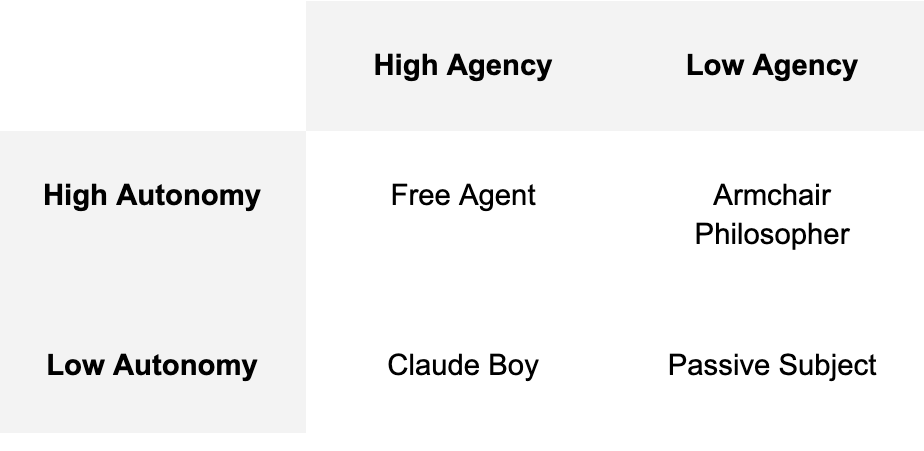

Sunstein’s paper operates on a single evaluative dimension. Every intervention is assessed by two criteria: does it improve welfare, and does it preserve freedom of choice? Both are real goods, but they sit on the same axis. Call it agency: the capacity to get what you want, measured by outcomes and options.

The axis Sunstein does not have is autonomy. Not autonomy in the loose sense of “having choices,” which is just agency again. Autonomy in the strict sense: self-rule. Authorship of the evaluative criteria by which options are assessed. A person has agency — agere, to act — when she can get what she wants. She has autonomy — auto nomos, self-rule — when she determines what to want, through her own exercised faculties of judgment in Mill’s terms, or through her own participation in the knowledge-generating processes Hayek describes.

These come apart. AI can increase agency while eroding autonomy. A Choice Engine that tells you what is best for you may be right. It may get you better outcomes. But if the standard by which “best” is determined has migrated from your judgment to the Engine, your autonomy has decreased. You are more powerful, but you are less free.

Sunstein’s paper treats both rows as one. He assumes that improving agency while preserving the option set is sufficient to protect autonomy.

His Choice Engines produce movement downward. Into the lower left. Claude Boys are powerful without being self-governing, formally free at every point, and yet not authoring the evaluative criteria by which a life is organized. Silicon Valley’s “you can just do stuff” brigade lives here, though it doesn’t know it.

This quadrant is easy to miss because it doesn’t look like a problem. It looks like liberal AI working exactly as designed. And all the while, the capacity that makes opting out meaningful is eroded, because it is no longer required.

Constitutional Drift

The phrase that does all the work in Sunstein’s paper is a counterfactual. Choice Engines steer people toward what they would choose if they were “adequately informed and free from behavioral biases.” The Engine corrects for departures from this idealized chooser.

But who defines the ideal? Who decides whether something counts as a bias or a preference, adequate information or noise, a self-control failure or a legitimate present-oriented value? The answer, necessarily, is the Choice Engine. Which means the Engine is authoring the normative standard against which the person’s actual choices are evaluated. The person experiences this as help. She believes she is getting closer to what she really wants. But “what she really wants” is a construction of the system, not an independent fact about the person.

This is constitutional drift: the migration of priority-setting from the person to the system. It is experienced from inside as self-improvement. The person feels she is becoming more rational. She is becoming more aligned with the Engine’s model of rationality. These feel identical from the inside, but may not be the same thing.

And the drift compounds. At time one, the person’s preferences are her own. She consults the Engine, accepts its recommendation, and her welfare improves. At time two, her preferences have been partially shaped by her previous Engine-assisted choices. By time ten, the convergence is nearly complete. The Engine recommends what she wants and she wants what the Engine recommends. The idealized chooser, “adequately informed and free from behavioral biases,” has become the Engine’s model of her rather than anything she authored herself.

This convergence is a ratchet. The more the Engine outperforms her independent judgment, the more rational it is to defer. The more she defers, the less her capacity is exercised, and the more the Engine outperforms her. At every point in this cycle, Sunstein’s framework reports success. It cannot represent the difference between a person whose welfare is high because she exercises excellent judgment and a person whose welfare is high because an Engine exercises excellent judgment on her behalf. They are in opposite positions with respect to their own freedom.
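The ratchet can be made concrete with a toy model. What follows is a minimal sketch, not anything drawn from Sunstein’s paper or the studies cited in this essay: the function name, the starting numbers, and both update rules are assumptions chosen only for illustration. It assumes she defers in proportion to the Engine’s share of combined skill, and that her judgment grows when exercised and atrophies when delegated.

```python
def simulate_ratchet(engine_skill, capacity, steps=10, rate=0.1):
    """Toy model of the deference ratchet (all rules here are illustrative assumptions).

    Returns a list of (deferral, capacity) pairs, one per decision round.
    Both skill and capacity are on a 0-to-1 scale.
    """
    history = []
    for _ in range(steps):
        # Assumption: she defers in proportion to the Engine's share of total skill.
        deferral = engine_skill / (engine_skill + capacity)
        # Assumption: judgment grows with the share of the choice she makes herself...
        exercised = (1 - deferral) * (1 - capacity)
        # ...and atrophies with the share she hands to the Engine.
        atrophied = deferral * capacity
        capacity += rate * (exercised - atrophied)
        history.append((deferral, capacity))
    return history


if __name__ == "__main__":
    # A strong Engine (0.8) meeting a reasonably capable chooser (0.6).
    for round_number, (deferral, capacity) in enumerate(simulate_ratchet(0.8, 0.6), start=1):
        print(f"round {round_number}: defers {deferral:.2f}, capacity after {capacity:.2f}")
```

Under these assumptions each round of deference leaves slightly less capacity, which makes slightly more deference rational in the next round. A welfare-only scorecard would record none of this, since the outcomes at each round stay good.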

There is early evidence this is already happening. Bastani et al. (2025) found that high-school students using ChatGPT scored 48% higher on practice assignments and 17% lower on exams taken without it. The work improved but the learning did not. Becker et al. (2025) found that experienced developers using AI coding assistants were 19% slower on tasks while believing they were 24% faster, a 43-percentage-point gap between perceived and actual performance. Fernandes et al. (2025) reported that higher AI literacy correlated with worse metacognitive accuracy about one’s own AI-assisted performance. The people most embedded in AI-assisted work were most wrong about what the assistance was doing to their independent capacity.

The habit of deferring to a well-designed Engine is the same habit a manipulative one exploits. The person whose evaluative capacity has diminished through years of benign delegation is the person least equipped to detect when an Engine shifts from serving her interests to exploiting them. Sunstein’s liberal AI creates the prey that his illiberal AI hunts.

The effect will not be evenly distributed. People with strong habits of deliberation will use Choice Engines as tools, retaining evaluative authority while extracting information. People without that formation will not supplement their judgment but cede it. These populations map onto existing inequalities in parenting and education. The structural effect of Choice Engines may be to concentrate the capacity for self-rule in those who already have it. This is the deepest inequality, and the least measurable.

The Tutelary Power

Sunstein frames his paper with Mill and Hayek. The thinker he needs, and does not cite, is Tocqueville. In the final pages of Democracy in America, Tocqueville describes a tutelary power that

takes charge of assuring their enjoyments and watching over their fate. It is absolute, detailed, regular, far-seeing, and mild. It would resemble paternal power if, like that, it had for its object to prepare men for manhood; but on the contrary, it seeks only to keep them fixed irrevocably in childhood… It does not destroy, it prevents things from being born.

The Engine does not coerce and it does not break wills. It softens, bends, and directs. It seeks not to prepare persons for independent judgment but to keep them, gently and perpetually, in a state of epistemic childhood. The capacities at risk here are not taken. They go unexercised until they are gone.

Every argument in “Liberal AI” presupposes an author with intact evaluative capacity. Sunstein weighs Mill against Hayek, assesses the evidence on nudges, evaluates the risks of manipulation, reasons about what would constitute genuinely liberal AI. He is arguing from inside a house his proposal would slowly empty. The capacities that make his paper important are the capacities that a generation raised on Choice Engines may never develop, because the Engines are so helpful, so liberal, and so respectful of freedom that the difficult and sometimes painful process of developing independent judgment is no longer necessary.

Mill understood this. The worst threat to liberty is not the tyrant who forbids you from thinking. It is the benevolent power that makes thinking unnecessary.

Sunstein’s Liberal AI is not an oxymoron. It is something more dangerous: a coherent program that achieves everything it promises while producing persons who can no longer do for themselves what it does for them. A generation raised on Choice Engines may never develop the evaluative capacity that was, in all prior human experience, the unavoidable byproduct of having to choose. We are building the conditions for that generation now.

The paper asks whether liberal AI can make life more free. It cannot ask what kind of persons will be left to be free, or whether freedom, for such persons, will mean anything at all.

Cosmos Institute is the Academy for Philosopher-Builders, technologists building AI for human flourishing. We run fellowships, fund fast prototypes, and host seminars with institutions like Oxford, Aspen Institute, and Liberty Fund.

Great piece, Brendan, thanks!

@Brendan McCord, this is one of the best essays I've read on AI and autonomy. You name what most AI criticism misses: the damage isn't in the bad outputs. It's in the good ones. The ones that work so well we stop doing the work ourselves.

I'm a therapist, and I see this in the room. The capacity you describe, what Mill calls "discriminative feeling," is not an abstraction. It lives in the body. We build it by sitting with uncertainty long enough for judgment to form. By tolerating the discomfort of not knowing what we want. By staying in the friction rather than reaching for the clean answer.

AI offers relief at the exact moment development requires staying. The output improves. The capacity shrinks. You call this "constitutional drift." In clinical terms, it is the industrialization of avoidance.

I recently wrote about this from the somatic side in The Splitting Machine: AI and the Failure of Integration

https://yauguru.substack.com/p/the-splitting-machine-ai-and-the?r=217mr3